By Tracy Marie Wamarema

Human relationships rely on emotional vulnerability as a catalyst for deep, lasting connections. When this vulnerability is instead shared with a chatbot, there is limited opportunity to develop real relationships. AI doesn’t just replace interaction; it reshapes how we connect to others. It encourages people to hide their deepest thoughts and share only risk-free information with others. In 2025, the Harvard Kennedy School published an article claiming that we are currently in a “friendship recession.” Solitude has become the default mode, and phrases like “my social battery is drained” populate the social media space. However, this tiredness does not mean people lack the capacity for friends; rather, they lack the capacity to perform for superficial friendships. The innate need to connect, be heard and feel understood is still present.

While in-person interactions have declined, on-screen interactions are on the rise: according to a 2023 Fortune article, the average screen time for teenagers is approximately nine hours a day. Meanwhile, the U.S. Bureau of Labor Statistics found Americans averaged just 43 minutes a day socializing and communicating in person in recent years. In a 2023 article, Forbes reported that 40% of Americans maintain online friendships via social media. In these cases, AI chatbots play the role of friendship coach and are used to craft the perfect messages and analyze social cues.

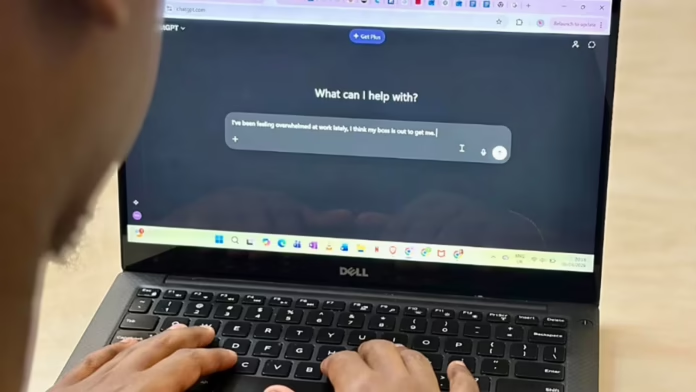

In 2025, Datareportal wrote that more than one billion people use AI each month. While social apps like Instagram took roughly three months to amass one million users, ChatGPT took five days. The former connects humans to each other while the latter connects humans to a program. While some use AI chatbots as tools for daily tasks such as research, others use AI chatbots simply to chat. At Sequoia Capital’s AI Ascent event in 2025, OpenAI CEO Sam Altman said, “Older people use ChatGPT as a Google replacement … people in their 20s and 30s use it like a life advisor.” This was reflected in a recent study conducted by CognitiveFX, in which all participants admitted to using AI chatbots for emotional support. Much as people seek therapy, 22% of participants corresponded daily with AI chatbots to improve their mental health. Whether AI was able to help them as a human therapist would is open to debate.

“The difference between AI and humans is context,” said Charity Wangige, a Kenyan UN intern. “The information the AI is going off is what you’ve told it. There’s a bias that humans can counter that AI cannot.”

For many users, AI chatbots are attractive because of their 24/7 availability, impartiality and privacy. Wangeci Nyaguthii, a 25-year-old Kenyan marketer, spoke to AI about her relationship. “I had consulted my friends, but they didn’t like the relationship from the jump, so I needed a different perspective,” she said. “I felt sort of embarrassed going back to a situation that I knew wasn’t good for me.” Talking to a chatbot erases that fear of embarrassment or judgment, which is a common barrier to social connection.

“AI will give you what you want to hear,” said Debbie Murithi, a psychologist working in the mental health department of Kenyatta National Hospital. She saw this firsthand when a patient trusted AI so much that it created competition between her psychiatrist and her chatbot. “They convince themselves that there’s a person behind the screen when there’s actual people all around them.” This patient was drawn to AI because she was isolated from her community: her family was unavailable and she had no one else to turn to. It’s a catch-22: loneliness drives AI use and AI use deepens loneliness. “The biggest anchor after medication is community and connection. Presence is such a big part of healing with regards to mental health,” said Murithi. She believes AI could be used as a reminder for taking medications or as a search engine for conditions. However, reliance to the point of regarding AI as a person is harmful.

Murithi also notes that accessibility is a key factor in why mental health patients tend to use AI chatbots. “It’s easily accessible and mental health care is expensive,” she said. Therapy costs north of 3,000 Kenya shillings per session, which is more than the average Kenyan can afford. “Another factor is stigma and fear of judgment,” Murithi said. “They turn to AI because it feels like a safe space.” However, because it operates on machine learning, AI rarely challenges one’s negative thoughts. Murithi says it would be more helpful if it could alert the nearest medical center when someone expresses suicidal ideation. At the end of the day, AI should not come at the cost of human connection.